Hope Is Not a Workflow

We posted two AI-forward roles on Substack. Here is what showed up in the DMs.

A few weeks ago I posted two job openings on Substack.

Not LinkedIn’s hiring platform.

Not Indeed’s hiring platform.

Not every university’s hiring platform.

Substack.

The application was one message.

Thirty words, maybe fifty. Over a thousand people read the post.

The DMs started the same day.

This is the midway report. We are still interviewing. I am writing this from inside the process, not after it. I will share the full retrospective when we are done. But the patterns that showed up are too useful to sit on, and some of them are about mistakes I made, not just things I learned about candidates.

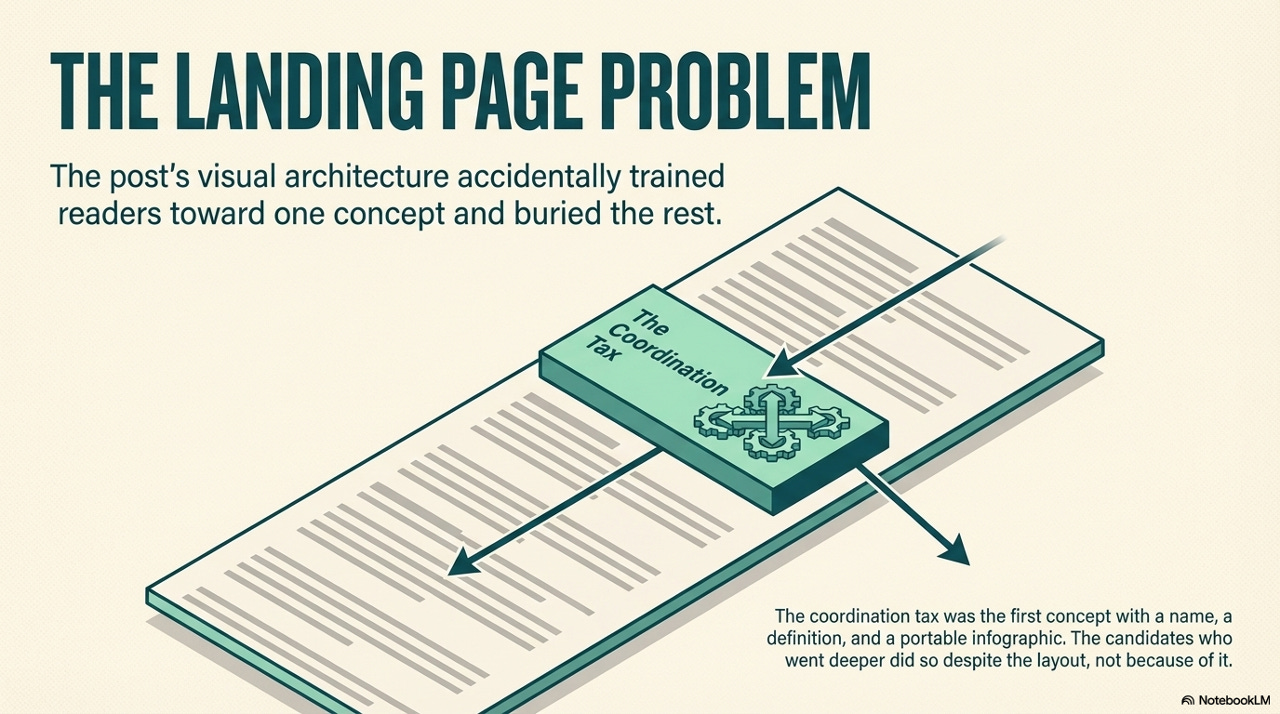

I built a landing page inside a blog post

The original post was 2,500 words. It contained at least ten distinct ideas. The coordination tax. Trust-building versus production mindset. Data stewardship. Four specific video use cases. The ownership framing: the job is what they shape.

Almost every candidate grabbed the same phrase.

I did not expect that. Then I went back and looked at the post the way a reader would scroll it, and I understood why.

The coordination tax is the first concept in the piece that has a name, a definition, and a full-screen infographic sitting right below it. The image is isometric, labelled, self-contained. It has the definition built into a callout box. A reader hits that image three scrolls in and their brain says: I have the concept. I can respond now.

Everything else in the post, the trust-versus-production argument, the data stewardship framing, the four video scenarios, the ownership model, lives in prose. No visual anchor. No portable label with a picture attached. Those ideas require more reading to extract. The coordination tax requires one good scroll.

I made it easy to grab. The infographic, the definition, the label. It was designed to be portable. The candidates who went past it did so on their own.

That turned out to be the filter I did not design.

I put ten ideas in a post. One of them became the password. Candidate after candidate referenced it, pitched against it, built their message around it. The rest of the post went mostly untouched.

This is not a complaint about the candidates. It is an honest accounting of what I built. The post’s architecture trained readers toward one concept and made every other concept harder to reach. The people who went deeper went deep despite the layout, not because of it.

If you are a nonprofit leader building a hiring process, that is worth sitting with. The way someone reads your material tells you something about how they will engage with your mission. But the way you design the material shapes what they can find. Both things are true at the same time.

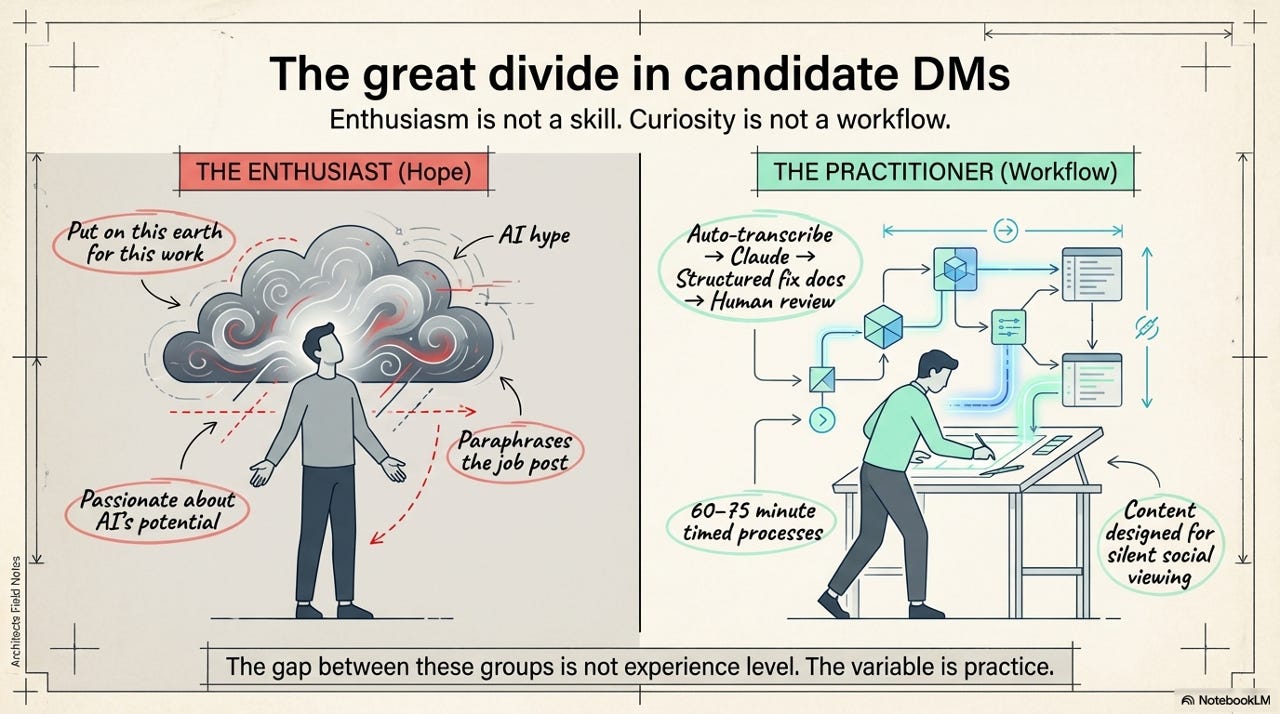

Hope versus workflow

The DMs split into two categories almost instantly. People who told me what AI could do. And people who showed me what they had already built with it.

The first group wrote about potential. They talked about being passionate, about believing AI would reshape design or analytics, about being “quite proficient” with AI tools. Some referenced university courses. Some paraphrased the post’s own arguments back as their pitch. One candidate said they were “put on this earth” for this work. The enthusiasm was real. The evidence was not.

The second group wrote about practice. One named five AI tools with specific daily use cases, not just the tool names but what each one does inside a production workflow. Another described a timed process: sixty to seventy-five minutes to turn a real story into a donor-ready video, start to finish. Another had built a pipeline where meeting recordings get auto-transcribed, fed to Claude, structured into fix documents, implemented through Claude Code, and reviewed by a human before shipping. One designed all their content for silent viewing first because they know that is how social media actually gets consumed.

The gap between these two groups is not experience level. Some of the strongest candidates are new grads. Some of the weakest have years on their resume with “AI” listed as a skill. The variable is practice.

Enthusiasm is not a skill. Curiosity is not a workflow.

The sector has been hiring for potential for so long that we have forgotten to look for proof. Every nonprofit hiring manager has an inbox full of the first type. The question is whether your process can surface the second.

What the strong ones do differently

The candidates who stood out did not have better resumes. They had a different relationship with the tools.

The first thing you notice is specificity. A weak application says “I use ChatGPT and Claude.” A strong one says “I use Descript to edit and clip audio into story segments, CapCut for pacing and captions optimised for retention, and Notion AI to turn messy research into structured content.” The difference is not vocabulary. It is evidence of a daily practice. Specificity reveals that someone has sat with the tools long enough to know what each one is actually good at.

The second thing is override. The strongest candidates did not just use AI output. They corrected it. One rejected clean synthetic data because real operational data is messy, and deliberately introduced noise to make the exercise realistic. Another stripped down an over-designed multi-chart layout into a single focused page because the AI’s version was trying to impress instead of inform. Another restructured a risk model from binary to three-part because the AI’s version was too simple to be useful. These are people who treat AI as a draft, not a deliverable.

The third thing is research depth. Some candidates built deliverables that could have been for any furniture charity in Canada. Others built around a specific Furniture Bank programme, a specific persona, a specific call to action tied to something real on our website. One candidate created a fictional but detailed donor persona, complete with giving history and lapse reason, and pointed the CTA to a real URL on our site. AI makes production easy. The hard part is knowing what to produce.

Once you see this pattern, you cannot unsee it. And it should make you uncomfortable, because most hiring processes would never surface these signals.

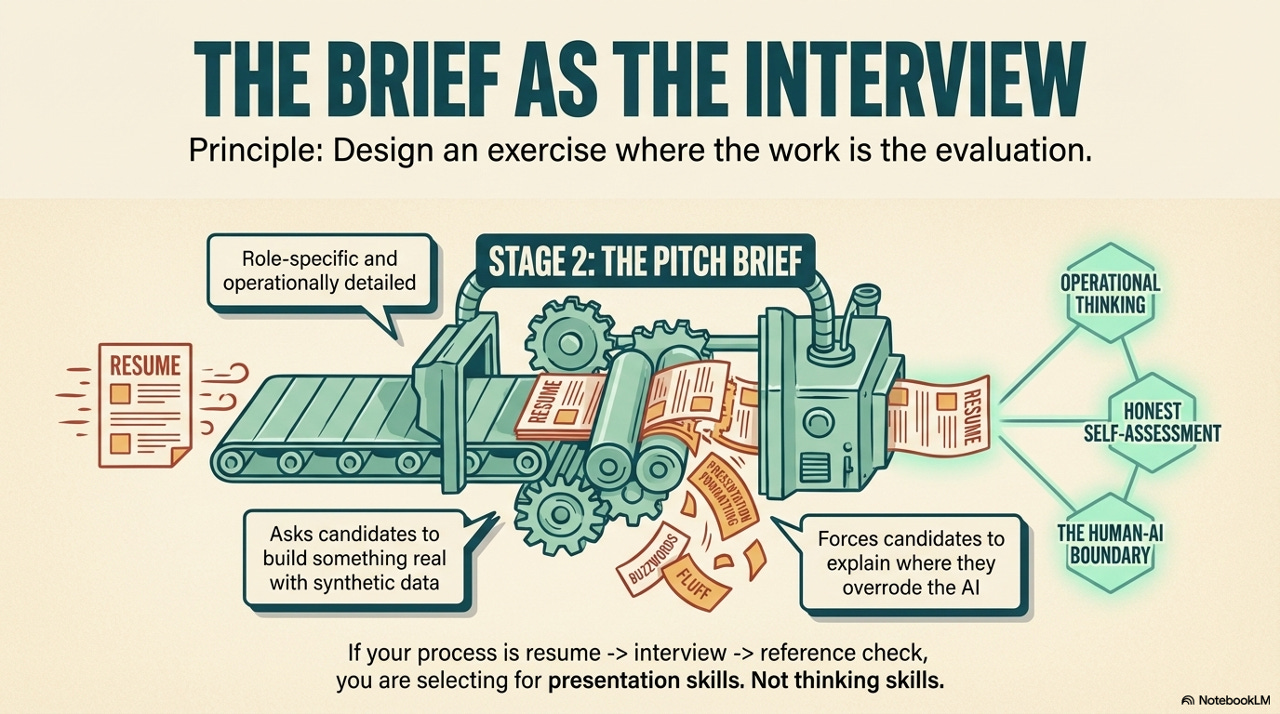

The brief as the interview

The most useful thing we built was not a job posting. It was a pitch brief.

Stage 1 is the DM. Stage 2 is the brief. It is role-specific and operationally detailed. It asks candidates to build something real with synthetic but realistic scenarios. It asks them what they would do on a specific day of the week. It asks what they got wrong and know they got wrong. It asks where AI helped and where they overrode it.

I am not going to share the brief or its questions. The filter needs to keep working. But the principle is transferable: design an exercise where the work is the evaluation.

What the brief revealed went beyond skill. Some candidates researched the actual organisation before starting. They watched our videos. They found our tech stack. They read programme descriptions. Others submitted work that could have been for any nonprofit with a Salesforce instance. One candidate submitted a GitHub repo with a data dictionary, a synthetic dataset, a working file, and an organised submission folder. The professionalism of the deliverable was itself a signal before anyone opened the file. Another spent three and a half hours and submitted an analysis that rejected the obvious approach that three different AI tools had suggested, because the obvious approach was wrong for the actual problem.

If your hiring process is resume, interview, reference check, you are selecting for presentation skills. Not thinking skills.

What this means for the sector

We are two weeks into interviews. The pipeline is real. And the thing I keep coming back to is how many of these candidates would never have made it through a traditional hiring process.

They came through Substack, not a job board. They were already engaged with the thinking before they applied. The strongest analytics candidate has no nonprofit experience but built a delivery risk model that identified operational assumptions most staff would not catch. The strongest design candidate described a production workflow faster and more specific than what most agencies would pitch. New grads and early-career candidates are outperforming mid-career applicants who have AI on their resume but not in their day.

The sector keeps asking “should we hire for AI skills?” That is the wrong question. The right question is: would your hiring process even recognise AI skills when they show up?

The talent is not missing. The infrastructure to find it is.

What comes next

We are not done. Interviews are happening now. The roles start in May. I will write the full retrospective when it is over.

The hiring committee includes staff from across Furniture Bank. Candidates are meeting the organisation, not just me. I am doing this as CEO alongside everything else, which means the coordination tax of hiring is real too.

Here is what I have learned so far, from the charity trenches, not the theory:

I built a post that accidentally trained people toward one idea and buried the rest. The candidates who went deep went deep on their own. The process I designed to be unfakeable worked, but it also showed me where my own communication created the problem I was trying to filter for. And the talent that showed up, the real talent, the people with workflows and override instincts and research depth, came from places our sector does not usually look.

If you are a nonprofit leader reading this: look at your last three hires. Did your process surface practice, or did it surface presentation? Did anyone build something, or did everyone describe what they would build?

Hope got them to the door. The workflow got them through it.