Compared to What?

More than 50 sources have cited our 2022 AI photo campaign. Most of them miss the point.

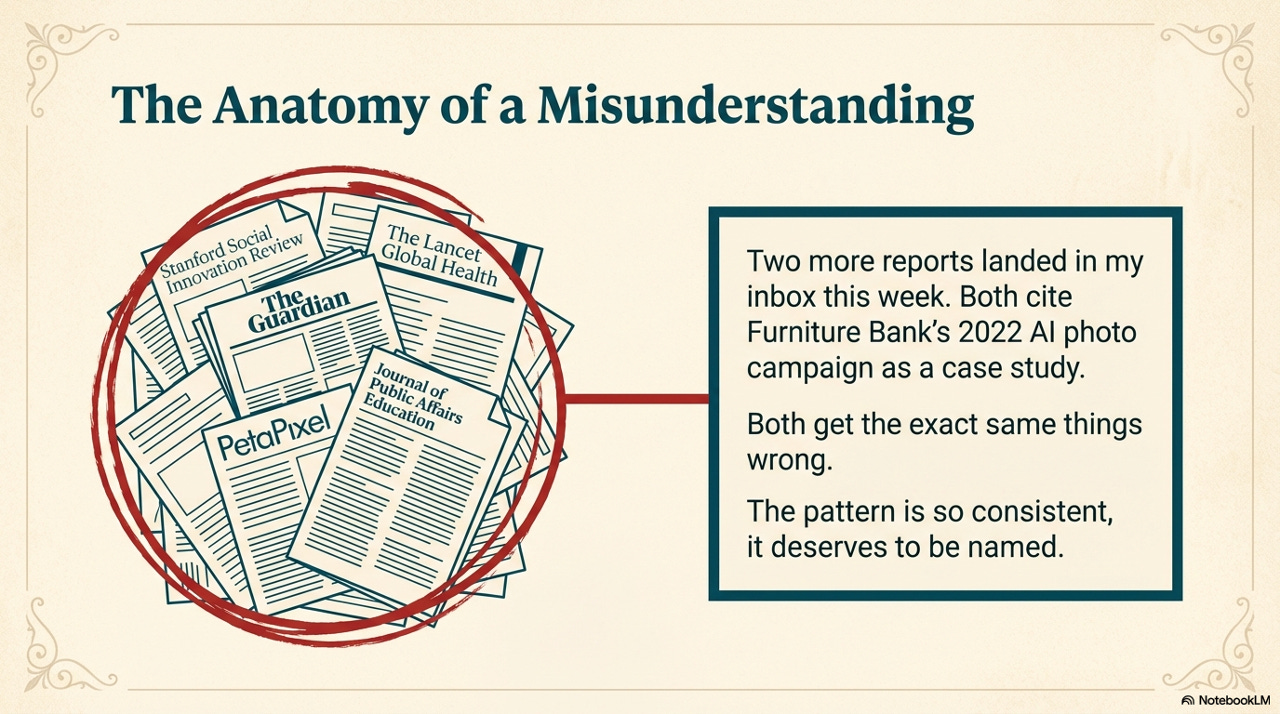

Two more reports landed in my inbox this week. Both cite Furniture Bank as a case study. Both analyse our 2022 AI photo campaign.

Both get the same things wrong.

I stopped counting external references somewhere around fifty. Academic journals. The Guardian. The Lancet Global Health. Stanford Social Innovation Review. Futurism. PetaPixel. A CliffsNotes prompt where undergrads decide whether what we did was “appropriate or not.”

The pattern is so consistent it deserves to be named.

The Campaign

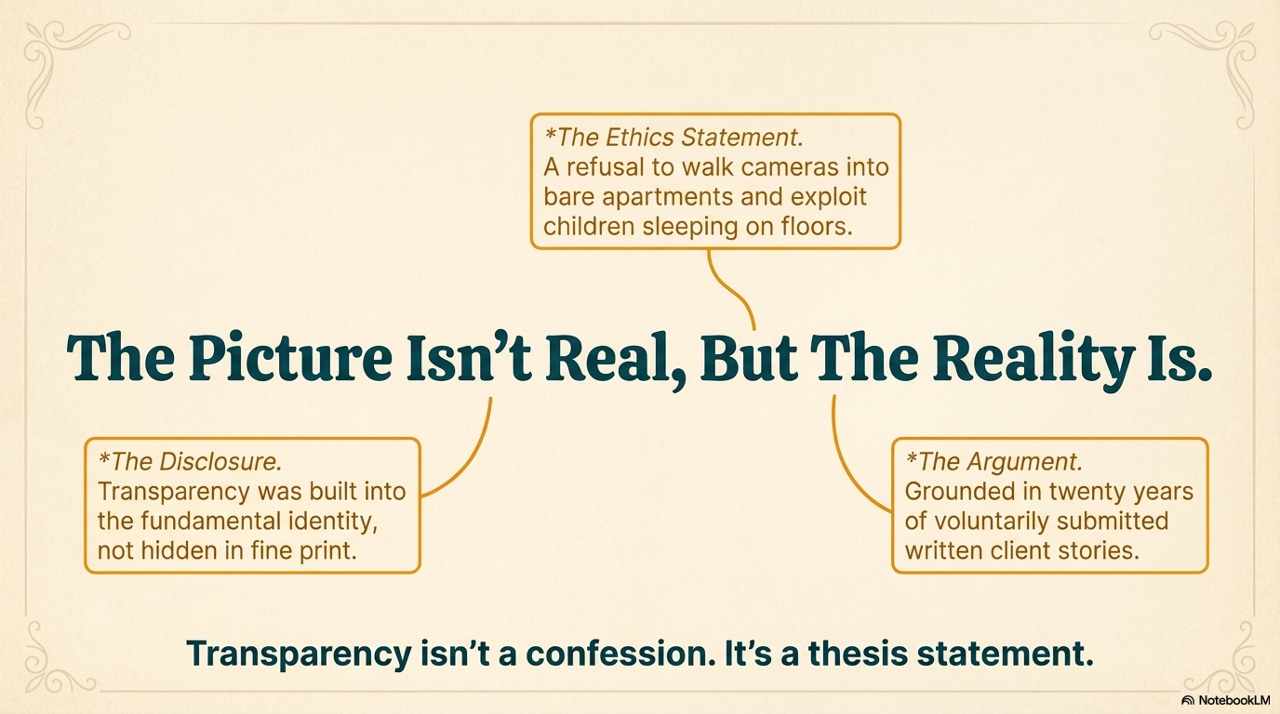

In November 2022, before ChatGPT launched, we sent a postcard to our donors. The images on it were generated by AI. The campaign was called “The Picture Isn’t Real, But The Reality Is.”

That name was a thesis statement, not a tagline.

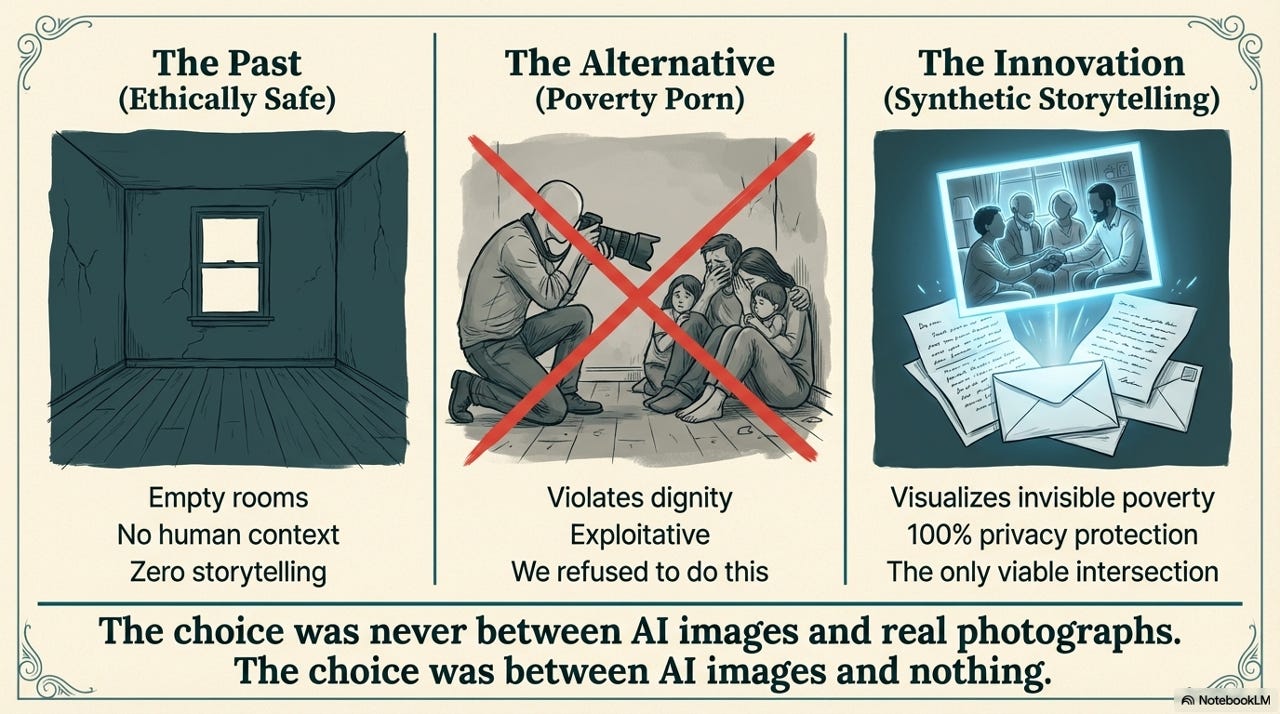

Furniture poverty is invisible. It happens behind closed doors. Families living without beds, without tables, without the basic infrastructure of a home. The only way to photograph it would be to walk cameras into those homes and document people at their lowest point.

That is poverty porn. We were not willing to do it.

We had never photographed clients in vulnerable moments. There was no consent form we were choosing to skip. There was no existing photo practice we were replacing. The images we had before were empty rooms. No human context. No story. No way for a donor to understand what it looks like when a child does homework on the floor.

What we did have was twenty years of client stories. Written by clients. Submitted voluntarily.

AI gave us a way to make those stories visible for the first time. We got estimates for a traditional photo campaign. The number came back at around $60,000, and we were warned it would probably run higher given the coordination involved. We were never going to spend that. No small charity sets aside $60,000 for a photo campaign. What we spent was $600. Not a savings. A creation. Something that was never going to happen became possible.

That is Opportunity AI. Not doing the same work for less. Doing work that was never happening because it was ethically and financially impossible.

Nearly every external analysis of this campaign gets that backwards.

Who Got It Right?

The people who understand fundraising asked the right question first. They asked: compared to what? Compared to photographing a child sleeping on clothes? Compared to empty rooms that tell no story? CharityVillage, The Philanthropist Journal, Nathan Chappell at Fundraising AI. They engaged with the problem the campaign was solving, not just the tool it used. Nathan put it in his textbook. His podcast hosts called it “a creative and transparent way of using AI that doesn’t diminish trust.”

Then there is everyone else.

Who Got It Wrong?

Stanford Social Innovation Review wrote that we “switched to AI-generated but realistic-looking images meant to evoke sympathy.” They used the word “sympathy.” As if the point was to manipulate donors rather than protect clients. They did not mention the campaign name. APRA used nearly identical language. Copy-paste. Same framing. Same omissions.

The Journal of Public Affairs Education cited us for “peril,” specifically the concern around donor transparency “when images were AI-generated without disclosure.” The campaign was called “The Picture Isn’t Real, But The Reality Is.” The disclosure was the name.

PetaPixel wrote that a Toronto charity “freely admitted” to using AI images. As if transparency is a confession. We built the entire campaign identity around telling donors exactly what we were doing and why.

The Guardian cited us as the precedent for a trend that now includes UN agencies generating fake images of sexual violence survivors. A domestic charity protecting clients from exploitation got filed next to international agencies fabricating atrocity imagery. Those are not the same act.

The Lancet Global Health published a Comment piece by Arsenii Alenichev coining the term “poverty porn 2.0.” Alenichev told The Guardian that organisations use synthetic images “because it’s cheap and you don’t need to bother with consent and everything.” He framed the protection of a client’s right to privacy as “not bothering.” As if walking a camera into a bare apartment and asking a mother to have her child pose on the floor is the morally superior option. The Lancet piece itself does not mention Furniture Bank. The journalists who reported on it made that connection for him.

The Pattern

There is a pattern in who gets it wrong. Every source that frames us as an ethical problem also omits the campaign name. Every one.

Strip the name, strip the intent.

The name was the ethics statement. The name was the disclosure. The name was the entire argument. The critics who wanted to frame this as deception had to remove it first.

The New Research

This month, the University of East Anglia published “Artificial Authenticity,” a study of 171 AI-generated images from 17 charities. Our 39 images are in the dataset. The researchers recommend that every organisation using AI-generated imagery should publish an ethical content policy specifying when AI imagery may be used, where it is not appropriate, and how outputs will be reviewed and disclosed.

We published that in May 2023. A 12-point AI Manifesto. A 12-point usage policy. Self-imposed limits: no AI video of client experiences. No AI images as substitutes for real photos of programs, clients, or team members. We referenced international frameworks because no Canadian standards existed yet. It certainly wasn’t perfect, but it certainly was progress.

Three years before the researchers said the sector needed governance, we already had it.

The UEA study surfaces a real tension I do not dismiss. Donors often prefer what researchers call an “authentic witness” over a beneficiary’s right to privacy. That is worth an honest sector conversation. But here is what the researchers miss: for Furniture Bank, there was never a photo practice to return to. We never photographed clients in crisis. We never asked families to pose in empty apartments. Before this campaign, the only images we had were bare rooms. No people. No story. No way for a donor to feel the weight of what furniture poverty actually looks like.

The choice was never between AI images and real photographs. The choice was between AI images and nothing.

The sector can debate the ethics of synthetic imagery all it wants. But the debate needs to start with the right question. Not “should charities use AI images?” but “what was happening before they did?” For us, the answer is: twenty-six years of invisible poverty. That is what we are being asked to go back to.

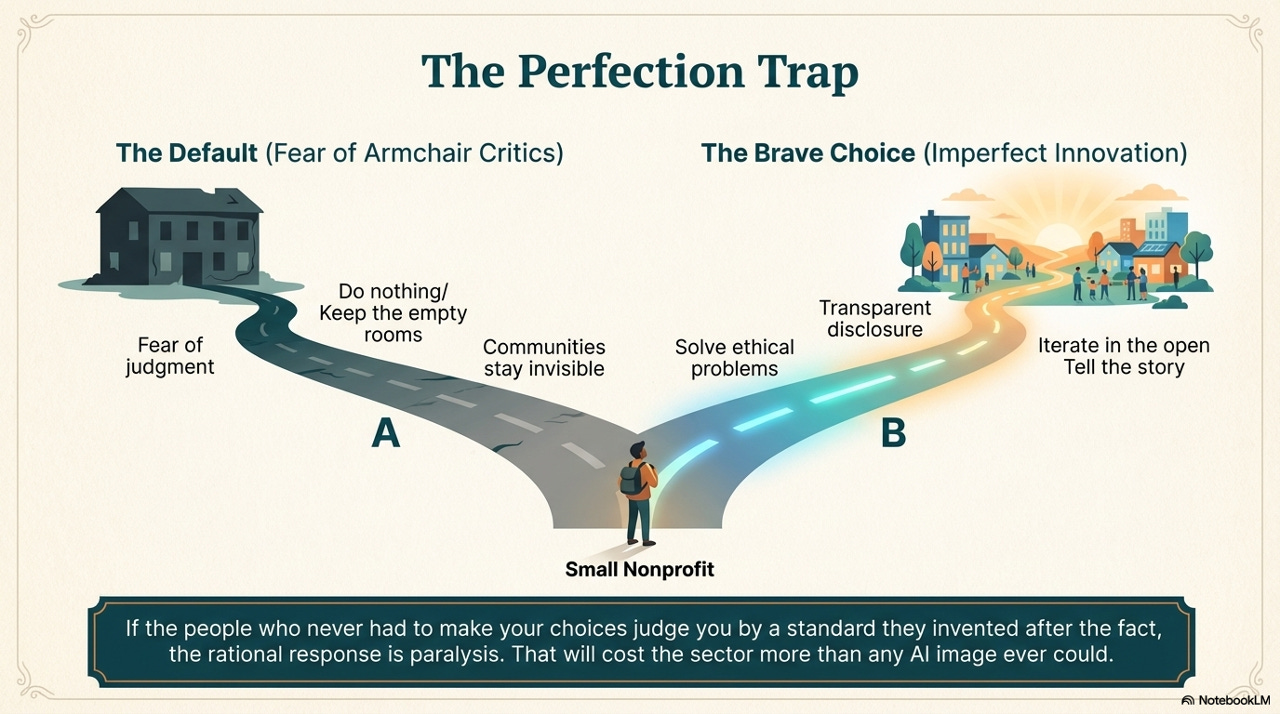

The Perfection Trap

I published a bias self-audit of our own campaign in January. I examined the racial composition of the AI-generated images because Sam Illingworth’s work on slow AI made me stop and look at something I had not examined carefully enough. I have never claimed the campaign was perfect.

But perfection was not the standard in 2022, and it should not be the standard now.

Every small nonprofit in this sector is watching how this plays out. They are reading the academic papers and the headlines, and they are learning that if you try something new, the people who never had to make your choices will judge you by a standard they invented after the fact.

That is the perfection trap. And it will cost the sector more than any AI image ever could. Because the next small charity with a genuine ethical problem to solve will look at what happened to us and decide it is safer to do nothing. To keep the empty rooms. To stay invisible. To let their communities suffer.

The campaign was called “The Picture Isn’t Real, But The Reality Is.”

Three and a half years later, the question is still the same.

Compared to what?